Just a question...

I recently upgraded my hardware: I replaced my old 21" CRT (1280x960) with a new 24" LCD (1920x1200), which is used as Monitor #2 in a 2-monitor setup. Monitor #1 is also a 24" LCD at 1920x1200 and remains unchanged.

To properly support the second digital monitor, I upgraded the video card from a DVI/VGA HD4850 to a DVI/DVI HD5770.

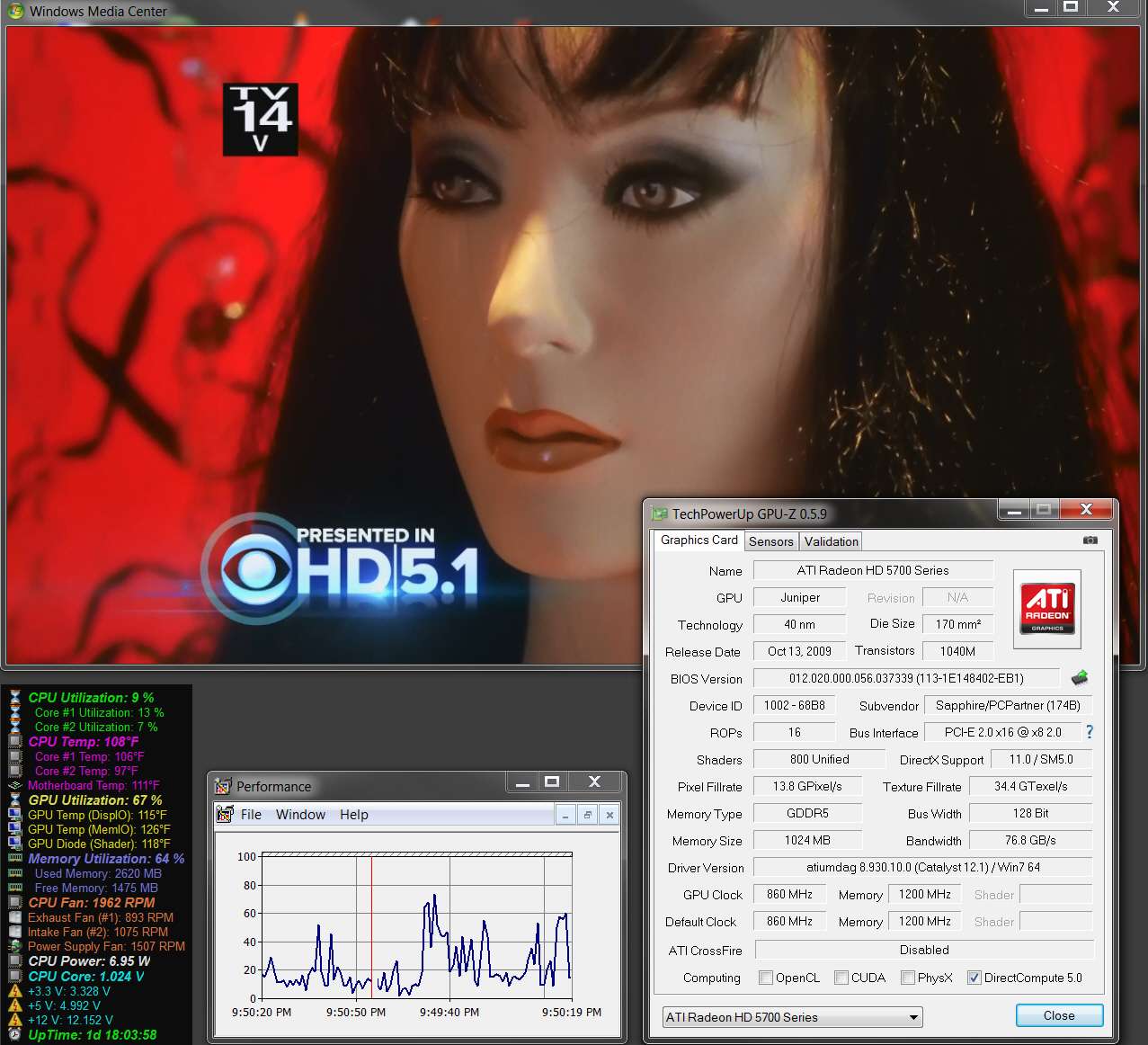

When I was using the HD4850 card, Aida64 reported about 20% GPU utilization while I was watching 1080i HDTV (in a window on Monitor #1). Now, with the HD5770, Aida64 reports much higher GPU utilization, around 65%. GPU utilization is lower when watching 720p, so I'm certain that what I'm seeing is a reasonably accurate representation of true hardware utilization related to HDTV.

It's hard to tell whether ordinary CPU utilization has changed when watching these HDTV programs, as it was and still is relatively low. So if it's now lower (because the HD5770 hardware is taking on the load), it's not obvious. Even with the HD4850, CPU utilization was not high when watching HDTV.

I don't know if this is anything I need to be concerned about, or whether this is good or bad, or whether this is simply an indication that the HD5770 hardware is actually performing much more of the HD-related processing whereas with the HD4850 that same required processing was handled by software in the drivers.

So... can anybody enlighten me as to whether what I'm seeing is perfectly normal and to be expected? Could this simply be a different way that Aida64 sees "GPU Utilization" from the HD5770, and is perhaps just more accurate than it was with the HD4850?

My Computer

- Computer type: PC/Desktop
- Computer Manufacturer/Model Number: Home-built, two systems (1) and (2)
- OS: Windows 7 Pro x64 (1); Windows 7 Pro x64 (2)
- CPU: i5-3350P 3.1GHz/6MB-cache (1); E8400 3.0GHz/6MB-cache (2)
- Motherboard: ASUS P8Z77-V Pro (1); ASUS P5Q3 (2)
- Memory: 8GB PC3-12800 DDR3 (1); 4GB PC3-10600 DDR3 (2)
- Graphics Card(s): ATI HD7750 (1), (see TV cards); ATI R7 250 (2)
- Sound Card: Realtek ALC892 HD Audio (1); Realtek ALC1200 HD Audio (2)
- Monitor(s) Displays: Eizo HD2441W LCD, Eizo S2433W (1); Eizo 24" S2433W (2)
- Screen Resolution: 1920x1200, 1920x1200 (1); 1920x1200 (2)
- Hard Drives:
  - (1) 1TB SATA-II (7200RPM), 2x2TB SATA-III (7200RPM), 250GB SATA-III (10000RPM) for OS; 2x2TB external USB 3.0
  - (2) 320GB SATA-II (7200RPM), 750GB SATA-II (7200RPM), 150GB SATA-II (10000RPM) for OS; 2TB external USB 3.0
- PSU: Nesteq ECS-6001 600W (1); Nesteq ECS-5001 500W (2)
- Case: Acousti-Case 360 (1) and (2)
- Cooling: Noctua NH-U12P SE2 for CPU, 2x120mm case fans (1) and (2)
- Keyboard: IBM PS/2 (1) and (2)
- Mouse: Logitech MX Revolution wireless (1); Microsoft wired (2)
- Internet Speed: 100 Mbps down / 10 Mbps up
- Antivirus: Microsoft Security Essentials; Malwarebytes Anti-Malware Pro
- Browser: Firefox
- Other Info: Ceton InfiniTV 4-tuner cablecard-enabled TV card as well as Hauppauge HVR-2250 OTA/ATSC 2-tuner TV card in (1), running under Win7 WMC